A critical new vulnerability has surfaced in LiteLLM.

CVE-2026-42208 is a pre-authentication SQL injection. With two requests and zero credentials, an attacker can use it to take full control of the gateway that brokers every prompt, response, and provider key in the AI stack.

Miggo's research team was able to weaponize CVE-2026-42208 in our own lab, building an end-to-end exploit chain that recovers virtual API keys via the SQL injection, replays them against authenticated endpoints, and produces remote code execution (RCE) inside the LiteLLM container.

We then mitigated that working exploit.

Crucially, while we generated precise WAF rules to block the initial SQL injection at the edge, Miggo’s in-app sensor detected the subsequent remote code execution out of the box. It did so by identifying the underlying malicious behavior rather than relying on a CVE-specific signature.

Every detection, every WAF rule, and every sensor signature was authored against a live, functional attack and validated end-to-end before it reached a customer environment.

This post covers what CVE-2026-42208 is, why it matters, how the exploit chain works, and how Miggo handles it.

Why LiteLLM Is a Critical Target (CVE-2026-42208)

Adversaries are actively targeting exposed AI middleware, and LiteLLM is one of the most widely deployed open-source AI gateways. It sits between an organization's applications and the upstream model providers (OpenAI, Anthropic, Bedrock, Vertex, Azure OpenAI, and dozens more), translating calls into a unified interface, applying rate limits, logging usage, and routing traffic.

Three properties make it an unusually high-value target:

- It holds the keys to every model provider in the stack. A compromised LiteLLM proxy means stolen OpenAI, Anthropic, and cloud provider credentials, often with elevated quotas attached.

- It logs prompts and responses by default. That includes whatever sensitive data the application is shipping into the model: customer PII, internal documents, code, credentials pasted into copilots.

- It is overwhelmingly internet-facing. Most teams stand it up as a proxy at the edge so internal services and partner integrations can reach it. In production scans we routinely see LiteLLM instances answering on public IPs.

What's actually at risk

When the gateway falls, three blast radii open at once:

- Provider credential theft. OpenAI, Anthropic, Bedrock, and Vertex API keys, along with LiteLLM's own virtual keys, sit inside the gateway's database and container environment. Once read, they remain valid until rotated, and most teams do not detect anomalous usage of a provider key for days.

- Prompt and response exfiltration. Conversation logs, embedded customer data, internal documents passed in for retrieval, and any secrets users pasted into copilots are recoverable from local storage and logs.

- Lateral movement into the application stack. LiteLLM is a privileged service: it speaks to identity providers, vector stores, internal microservices. An attacker on the gateway is one hop from the rest of the environment.

A compromise at the gateway is therefore not a single-application incident. It is a credential, prompt-data, and downstream service incident all at once.

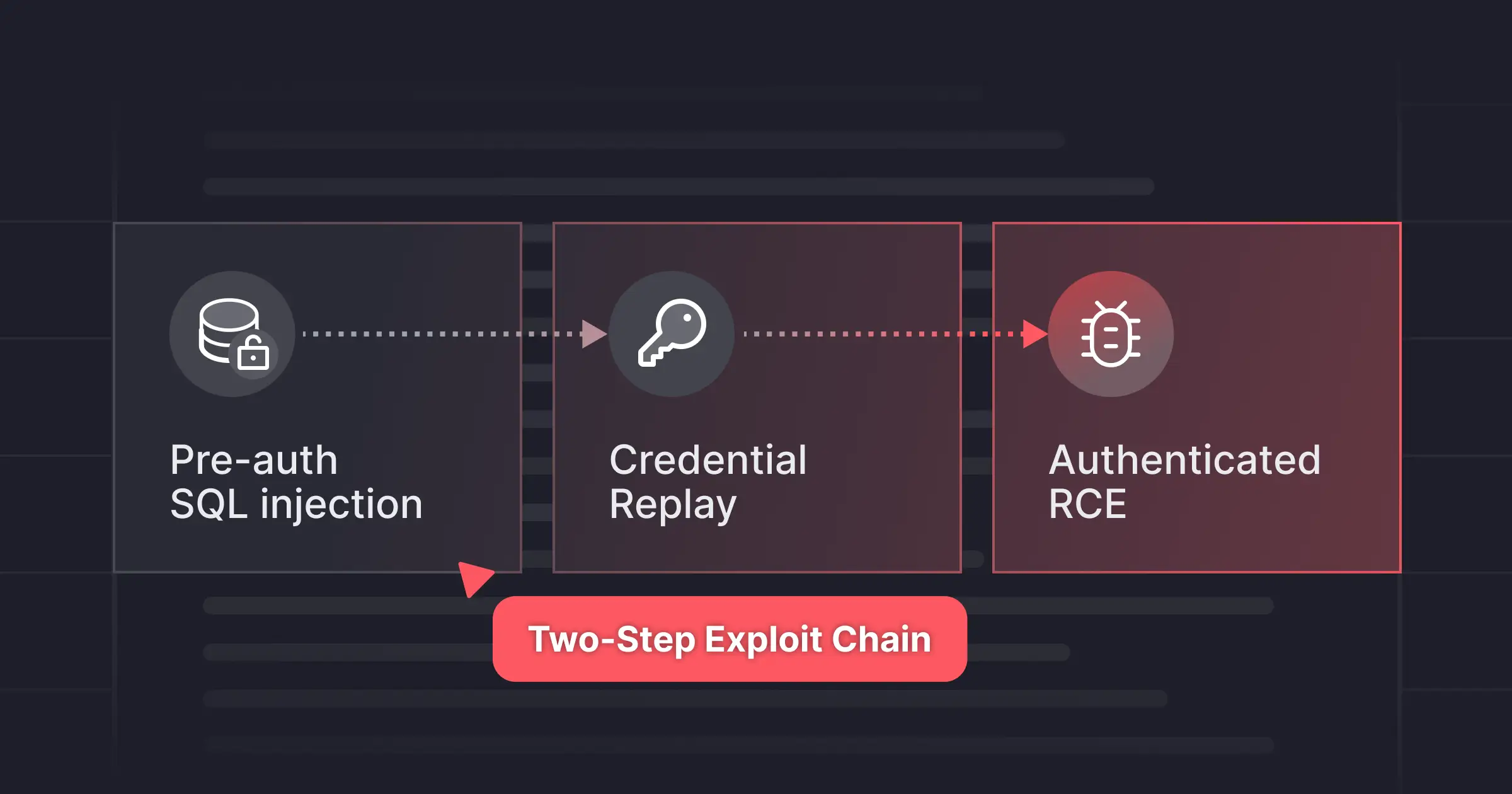

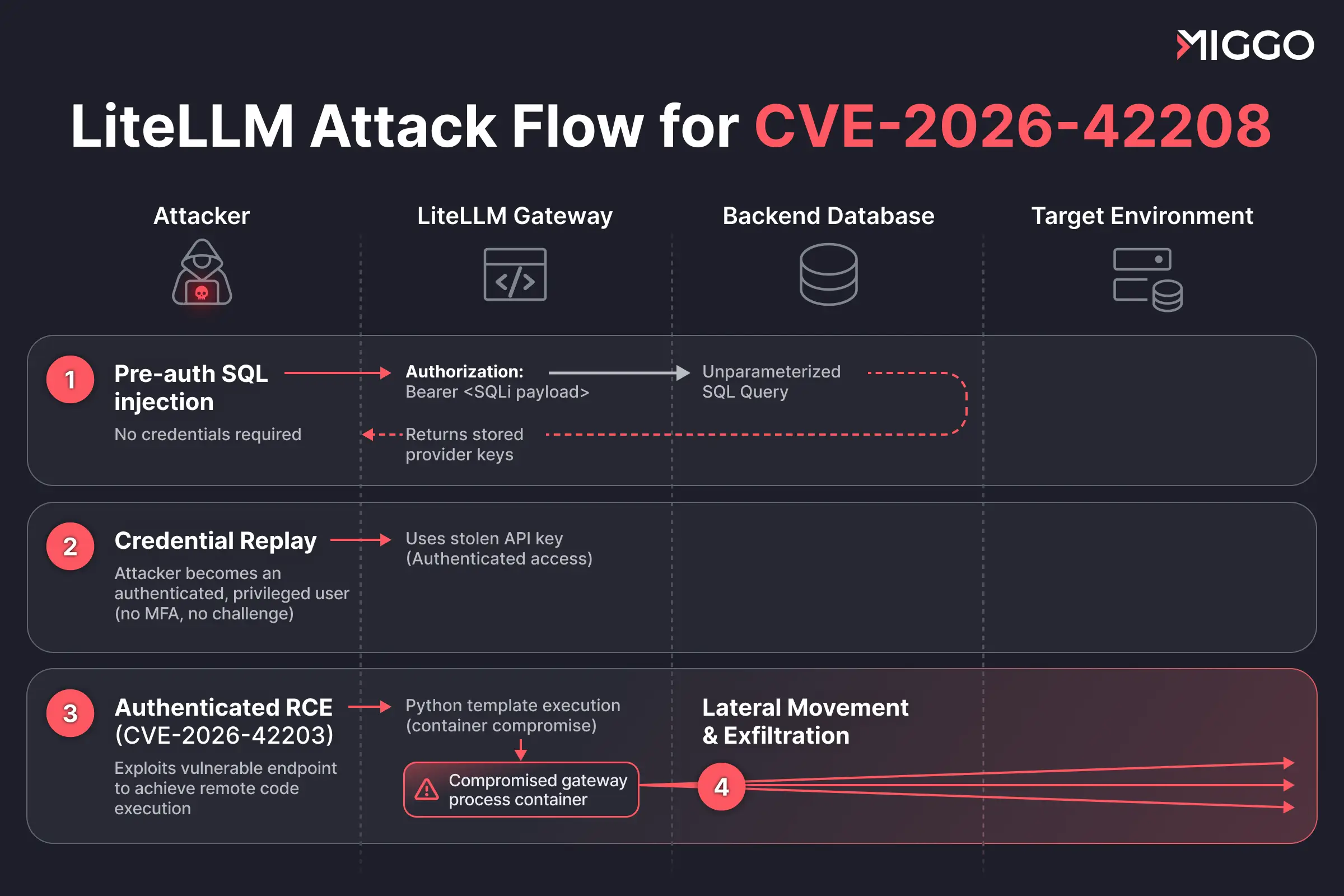

Technical Overview: The vulnerability chain in plain language

The attack path the public advisories enable is short and unforgiving.

Step 1: Pre-auth SQL injection (CVE-2026-42208). The proxy's authentication path concatenates the value of the Authorization: Bearer header into a SQL query against its backend database without parameter binding. An attacker who can reach the proxy over the network can inject arbitrary SQL, with no credentials, user interaction, or prior access required, and read directly from the database, including the table where LiteLLM stores virtual API keys and provider credentials.

Step 2: Credential replay. With a recovered key in hand, the attacker is now an authenticated, privileged user of the gateway. No second factor needed, no further auth challenge.

Step 3: Authenticated RCE (CVE-2026-42203). The recovered credentials unlock authenticated endpoints that, when chained with the server-side template injection (SSTI) covered by the second advisory, produce remote code execution inside the LiteLLM container.

That is the chain: an unauthenticated attacker on the internet, two requests later, executing code inside the container that brokers every API key, every prompt, and every response in the AI stack. The window between disclosure and active exploitation was 36 hours and 7 minutes.
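Step 1's root cause is a classic one: the header value becomes part of the SQL text instead of being passed to the database as data. The sketch below contrasts the two patterns using Python's built-in sqlite3 module and a hypothetical `virtual_keys` table as stand-ins. It is illustrative only; LiteLLM's actual database backend, schema, and query code are not reproduced here and will differ.

```python
import sqlite3


def make_demo_db() -> sqlite3.Connection:
    """Build an in-memory stand-in for a gateway key store (hypothetical schema)."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE virtual_keys (token TEXT PRIMARY KEY, owner TEXT)")
    conn.execute("INSERT INTO virtual_keys VALUES ('sk-demo-123', 'team-a')")
    conn.commit()
    return conn


def lookup_key_unsafe(conn: sqlite3.Connection, bearer: str):
    # VULNERABLE PATTERN: the header value is concatenated into the SQL text,
    # so quotes and keywords inside it change the structure of the query.
    query = "SELECT owner FROM virtual_keys WHERE token = '" + bearer + "'"
    return conn.execute(query).fetchone()


def lookup_key_safe(conn: sqlite3.Connection, bearer: str):
    # FIXED PATTERN: the value is bound as a parameter, so the driver always
    # treats it as data and never as SQL syntax.
    return conn.execute(
        "SELECT owner FROM virtual_keys WHERE token = ?", (bearer,)
    ).fetchone()
```

With the bound version, a token containing a stray quote simply matches no row. With the concatenated version, the same quote breaks the query's syntax, which is the first sign that attacker input is steering the SQL. Parameter binding is the fix at the source; edge and in-app controls cover the window before that fix is deployed.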

Miggo’s Approach: Walking the Walk on Defense in Depth

For most organizations running LiteLLM at the edge, this is a top-tier incident, and the 36-hour disclosure-to-exploit window means patching alone is not a defense plan; it is a race. For CVE-2026-42208, Miggo moves security teams from posture assessment to automated mitigation via AWS WAF within minutes.

1. See reachable exploitation paths in your environment

Posture assessments alone are insufficient. Miggo provides precise visibility into which LiteLLM instances in your environment are open to the internet and vulnerable to this specific RCE exploit path. We validate "Environment Truth": is the vulnerability truly reachable? If it is, we show you exactly how an attacker would attempt to gain access via the authentication bypass.

2. Mitigation before you can patch: Automated WAF rules

Miggo WAF Copilot automatically generates rules that stop CVE-2026-42208 and its variants, along with the technical logic behind them. Applied at the correct network location, these rules block the SQLi and SSTI requests of the associated vulnerability chain without impacting normal application usage.

How the WAF rule works

The rule has one job: catch CVE-2026-42208 exploitation in the Authorization header without touching legitimate traffic. It does that by chaining two conditions, both of which have to be true before the request is blocked.

1. The vulnerable path anchor. Valid LiteLLM virtual keys begin with sk-. The SQL injection only reaches the database query through an error-handling branch that fires when the Bearer token does not match that prefix. So the rule's first check is simple: is this a Bearer token that does not start with sk-? If yes, the request is on the vulnerable code path. If not, the rule ignores it.

2. The payload check. A malformed token alone is not an attack; users mistype keys all the time. So the rule then inspects the token for SQL injection syntax: single quotes, SQL keywords (SELECT, UNION, OR), and time-based primitives (pg_sleep, WAITFOR DELAY).

Both conditions have to fire together.

The result is a rule pinned to the exact shape of the vulnerability. Legitimate keys never trigger it. Mistyped keys never trigger it. Only requests that are simultaneously on the vulnerable path and carrying SQL injection syntax get blocked, which is exactly the exploit and nothing else.
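The two-condition logic can be expressed as a small Python predicate. This is a hypothetical sketch of the matching logic only: it is not the rule Miggo WAF Copilot emits and not AWS WAF rule syntax, and the `should_block` name and the exact regular expressions are assumptions chosen to mirror the conditions described above.

```python
import re
from typing import Optional

# Condition 1 input: the token following a "Bearer" scheme
# (auth scheme names are case-insensitive in HTTP).
BEARER_RE = re.compile(r"^\s*Bearer\s+(?P<token>.*)$", re.IGNORECASE | re.DOTALL)

# Condition 2: the SQL injection syntax named in the rule description.
# Word boundaries keep ordinary substrings such as "order" from matching "OR".
SQLI_RE = re.compile(
    r"'|\b(?:SELECT|UNION|OR)\b|\bpg_sleep\b|\bWAITFOR\s+DELAY\b",
    re.IGNORECASE,
)


def should_block(authorization: Optional[str]) -> bool:
    """Return True only when both conditions hold (hypothetical helper)."""
    if not authorization:
        return False
    match = BEARER_RE.match(authorization)
    if match is None:
        return False  # not a Bearer header: outside this rule's scope
    token = match.group("token").strip()
    # Condition 1: tokens without the sk- prefix take the vulnerable branch.
    on_vulnerable_path = not token.startswith("sk-")
    # Condition 2: the token carries SQL injection syntax.
    carries_sqli = SQLI_RE.search(token) is not None
    # Block only when both fire together.
    return on_vulnerable_path and carries_sqli
```

A well-formed key such as `Bearer sk-abc123` fails condition 1, and a mistyped key such as `Bearer skabc123` fails condition 2, so neither is blocked. A regex this short is a blunt instrument; a production rule would still need tuning against real traffic.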

3. Block the exploit for unknown vulnerabilities

Patches close one path. Another layer can block exploitation without relying on a CVE-specific signature.

Behavioral Boundaries (In-App Sensor): Miggo detected the exploit without knowing the specific CVE by tracking how the Python application processes payloads and unfolding them into low-level behaviors. The remote code execution in this chain relies on a technique called Server-Side Template Injection (SSTI) in Python template rendering.

We stop unsafe template rendering at the boundary. Known chain or unknown variant, the exploit is stopped at the same line.

Immediate Actions

If you are using LiteLLM within your AI supply chain, your posture has changed, regardless of whether you have patched.

Miggo can help you block LLM attacks and see your precise exposure to CVE-2026-42208. If reachable exposure is detected, Miggo will prompt you to automatically deploy the corresponding mitigation rule to your WAF within minutes, closing the patch gap before attackers can exploit it. See your environment now.